How Our Architecture Evolved

At the start of 2019, ISC Engineering was a new department in Wayfair, working within a monolithic codebase that housed the majority of the company’s engineering services. This is what we call a monorepo.

In the early days, our team experienced the burn that comes with the realities of having a large monorepo. This included a deployment process where it took hours on average to get code into production and, in worst-case scenarios, multiple days. This severe lag stemmed from having code housed with so many other services — regardless of whether or not the code actually interacted with or affected those other services.

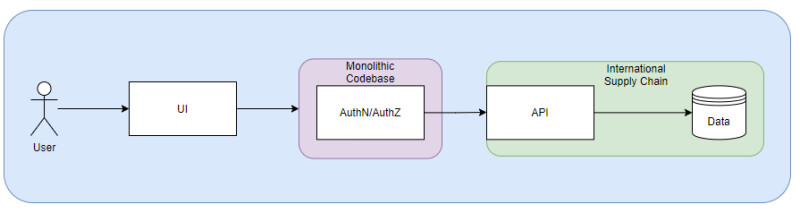

Naturally, it quickly became apparent that this model was incapable of supporting an agile, fast-moving company. With that, Wayfair decided to split the monorepo into smaller microservices. Our department was quick to volunteer to work on the process, which began with our decision to break out ISC services while keeping authentication and authorization in the monorepo (see the graphic below).

With our marching orders, we migrated our existing services and some new ones onto this new architecture over the months that followed. It was a significant success! We saw deployment times quickly shrink from hours to minutes and began shipping to production at a dramatically increased rate.

But the team wasn’t fully satisfied. We still needed to smooth out the architecture, which brought us back to authorization and authentication. At that point, we quickly learned that these two were tightly coupled. This presented challenges because fitting the already-existing authorization patterns and rules into the monorepo would be far more complicated than expected.

If we chose to move forward, the role-based data filtering would require custom implementation logic that was tied with the business logic in the monorepo code. Our engineers dreaded the idea of building out new authorization rules that would require extensive time and effort to complete. In the end, we determined the better course of action was to split our authorization into its own service. All that was needed was to test it out.

Proof of Concept

We decided to create a quick proof of concept during the ISC Engineering hack week. For those not familiar, hack week is a week-long internal hackathon where small teams of engineers work on small proof-of-concept projects to improve particular parts of our system. As we prepared to move authorization under our control, we wrote out a mission statement:

“...we would like to prototype a standalone platform that allows customers, partners, and operators collaborate with a clear boundary for data ownership and visibility. This requires a permission model that can protect the data security for different user personas while being easy to maintain as the number of users and services grow in the future.”

After researching various authorization libraries and architectures, we decided that the Oso authorization framework was the best fit for our needs. We wanted something that had the following attributes:

- Integrated easily with Python and provided continued support for it.

- Was easy for new engineers to learn and use.

- Was robust in design and flexible for many different use cases—we were especially excited to use their role-based access control and attribute-based access control features.

- Could help us separate the authorization logic from the business logic and keep our codebase clean.

- Was actively maintained with new features being implemented and bugs consistently fixed.

- Had thorough documentation and an active community.

With Oso in hand, we spent that first week building our design on the Oso framework. This let us write an authorization policy in their declarative language, Polar. Here is a generic example of a user being given updated access to a resource based on whether or not they have admin status:

In the above example, we can see that a user can only access a specific resource if their ID matches that of the person who created the resource. Later on, we make it even more strict by stipulating that only members can update the resource while viewers cannot take any action.
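For readers who want to see the shape of these rules without Polar, here is a rough plain-Python sketch of the same logic. It is not Oso code and not our production policy: the `User` and `Resource` classes, the role strings, the action names, and the `is_allowed` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    id: int
    role: str  # hypothetical roles: "member" or "viewer"


@dataclass(frozen=True)
class Resource:
    id: int
    created_by: int  # id of the user who created the resource


def is_allowed(user: User, action: str, resource: Resource) -> bool:
    """Plain-Python stand-in for the rules described above (not Oso's API)."""
    # Viewers cannot take any action.
    if user.role == "viewer":
        return False
    # A user can only access a resource they created.
    if user.id != resource.created_by:
        return False
    if action == "read":
        return True
    # Only members may update the resource.
    if action == "update":
        return user.role == "member"
    # Deny anything the rules do not mention explicitly.
    return False
```

In a declarative policy language these checks live in a policy file instead of being scattered through application code, which is the separation we were after.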

Here is a generic example of loading the Polar file and using the authorized_sessionmaker:

The authorized_sessionmaker is a powerful tool provided by Oso. It applies Oso policies to the SQLAlchemy query itself (Oso calls this data filtering), so the data is filtered while querying instead of being filtered after the query returns.
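To make the idea of filtering at query time concrete, here is a minimal sketch that uses only Python's built-in `sqlite3` module. It does not use Oso or SQLAlchemy; the `resources` table, the `build_filter` helper, and the rule it encodes (members see only rows they created, viewers see nothing) are assumptions for illustration.

```python
import sqlite3
from typing import List, Tuple


def build_filter(user_id: int, role: str) -> Tuple[str, tuple]:
    """Translate a hypothetical policy into a SQL WHERE fragment plus parameters."""
    if role == "viewer":
        return "0 = 1", ()  # viewers match no rows
    return "created_by = ?", (user_id,)


def authorized_resources(conn: sqlite3.Connection, user_id: int, role: str) -> List[tuple]:
    """Return only the rows the user may see, filtered inside the database."""
    where, params = build_filter(user_id, role)
    # The WHERE fragment is one of the fixed strings above; user-supplied
    # values are passed separately as bound parameters.
    cursor = conn.execute(
        f"SELECT id, name FROM resources WHERE {where} ORDER BY id", params
    )
    return cursor.fetchall()


# Small in-memory table to exercise the filter.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE resources (id INTEGER PRIMARY KEY, name TEXT, created_by INTEGER)"
)
conn.executemany(
    "INSERT INTO resources VALUES (?, ?, ?)",
    [(1, "po-1", 7), (2, "po-2", 8), (3, "po-3", 7)],
)
```

Calling `authorized_resources(conn, 7, "member")` never pulls user 8's row out of the database at all, which is the benefit over loading everything and discarding unauthorized rows afterwards.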

And finally, we need to make sure our Oso rules are also being applied on writes:
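As a rough sketch of what enforcing rules on writes can look like, the snippet below checks permission before any change is applied. It is plain Python rather than Oso code; the in-memory `STORE`, the dictionary-shaped users, and the `can_update` rule are hypothetical stand-ins.

```python
from typing import Dict

# Hypothetical in-memory store: resource id -> record.
STORE: Dict[int, dict] = {
    1: {"name": "po-1", "created_by": 7},
}


def can_update(user: dict, record: dict) -> bool:
    """Assumed rule: only a member who created the record may update it."""
    return user["role"] == "member" and user["id"] == record["created_by"]


def update_resource(user: dict, resource_id: int, changes: dict) -> dict:
    """Apply changes to a record only if the user is authorized to update it."""
    record = STORE[resource_id]
    # Check authorization before touching the record so that a denied
    # write leaves the stored data unchanged.
    if not can_update(user, record):
        raise PermissionError(
            f"user {user['id']} may not update resource {resource_id}"
        )
    record.update(changes)
    return record
```

The point of the pattern is that the write path and the read path consult the same rules, so a user cannot modify something they would not be allowed to see.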

From Proof to Production

With proof that we could have authorization within our domain, the next step was figuring out what the bigger picture would look like.

When you look at this image, you’ll notice a very small portion of authentication is still tied into the monorepo. That’s because we are using the internal single sign-on provided by Wayfair to serve as our initial login mechanism for our users. The setup works because the main issue from the beginning was trying to build our own authorization rules within the already existing codebase. Authentication itself wasn’t our pain point.

At this stage, we entered production mode. Naturally, it would have been great if, after all our work, this could have been done through a simple “copy and paste the POC code into production.” But it’s never that easy, is it? And would it be any fun if it were? In the end, our production data ended up being more in-depth than what we featured in the POC. To ensure it was done right, we looked for guidance from engineers who specialize in authorization. In this instance, the answer was the team that created the authorization framework we were using.

Today, we are even looking into the lift required to use the new features Oso released in their 0.20.0 update, which streamlines the Polar language even further with resource blocks.

Collaboration

We reached out to the Oso engineering team for an initial meeting to discuss how we use their product and our vision for the future. At that moment, we established an engineering relationship. They were tremendous assets in our effort to move authorization to a distributed model. Over this process, we had regular meetings with their team, who helped us evolve our design while answering any questions from our engineers. We also leveraged their Slack community, giving our engineers the opportunity to collaborate and ask questions regarding authorization and the Oso framework.

Today, we have our production model up and running, and we have multiple services within our domain that are utilizing Oso as their source of authorization management. But we’re not done. Both engineering teams still meet regularly as our framework and domain models continue to evolve. Naturally, we’re excited to see what comes next.

With that, I would like to give a big shout-out and thanks to Graham, Sam, and the rest of the Oso team for assisting us in “productionalizing” our solution and building the great community over at Oso. Take a bow!

Interested in Learning More?

For more information about LogTech and International Supply Chain at Wayfair, including a deeper look at what our team does and a few of the challenges we’ve faced, feel free to check out our other blog post: Leading In #LogTech: An Introduction to the Tech Team Behind our Global Logistics Network. Stay on the lookout for more blog posts related to LogTech and International Supply Chain in the future!